How to Build a Test Automation Strategy

Once the decision has been made to roll out test automation, the next question is: “How are you actually going to do this?” In IT, you have two choices: skip a few days of planning and deal with weeks of issues, or invest time building a solid strategy to save precious time during sprints and enhance long-term efforts.

This article explains how to create and implement a robust test automation strategy so that your QA team's efforts go where they matter most.

Skip ahead to:

What is a software test automation strategy?

What happens without an automated testing strategy in place?

What’s the purpose of a test automation strategy?

The benefits of a test automation strategy

How to build a test automation strategy

Test automation strategy example

Conclusion

As software development accelerates, the importance of test automation keeps rising. Quality assurance teams are turning to automation to streamline workflows and make their testing more efficient and dependable.

Central to these efforts is a robust test automation strategy that serves as a roadmap for change.

What is a software test automation strategy?

A software test automation strategy is a detailed plan outlining how you will implement automation. It should serve as your playbook for improving your software testing processes through automation, and it should be continually updated and refined.

A test automation strategy should mirror your broader testing strategy, applying similar techniques and data points to determine what to automate, how to do it, and which technology to use.

Like any testing strategy, it defines key focus areas, including scope, goals, types of testing, tools, test environment, execution, and analysis.

This guiding document ensures your automation efforts align with broader software development project goals and contribute to the project's success.

What’s the purpose of a test automation strategy?

The main goal of a test automation strategy is to offer a structured and clear plan for implementing automation in your software testing process. It outlines the goals, scope, processes, and approach for automation, ensuring it aligns with the overall objectives of the software development project.

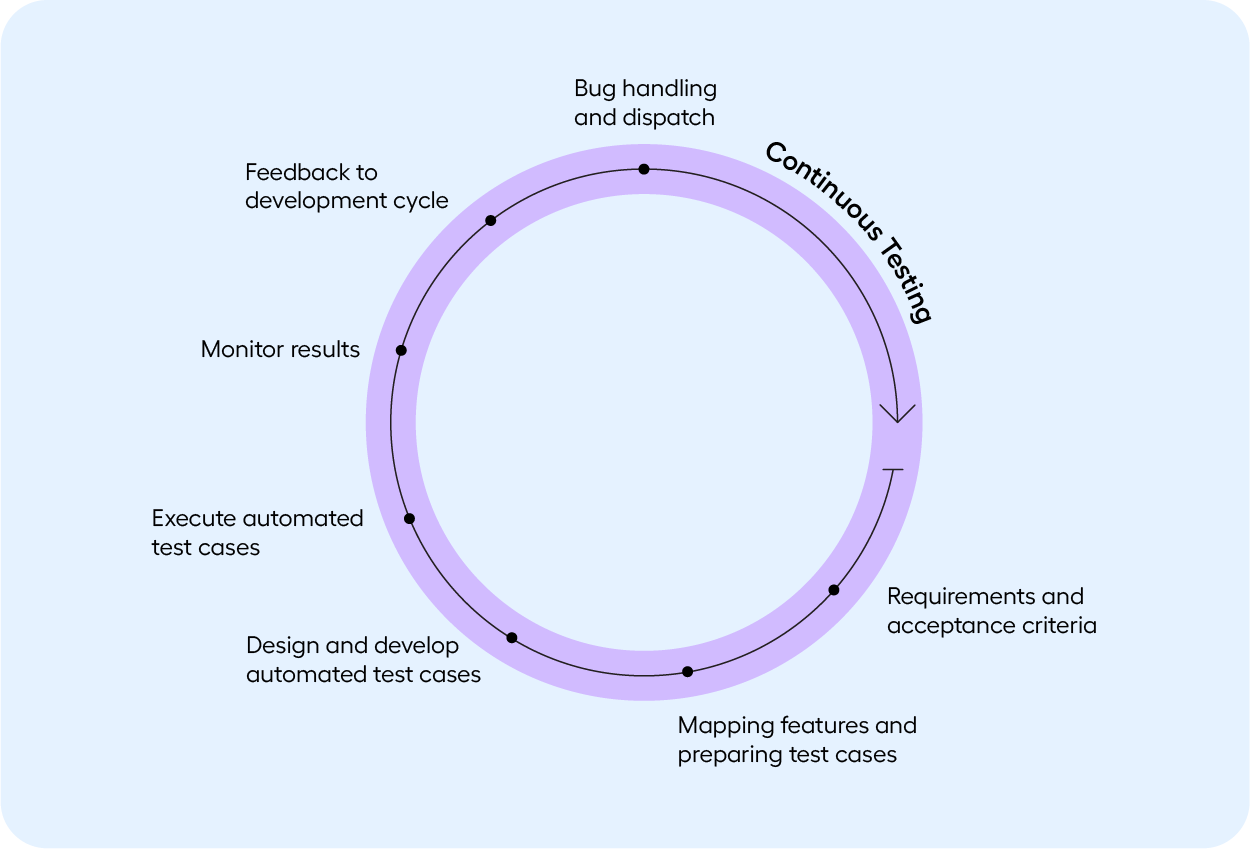

It aims to harmonize automation efforts with the project's overall objectives by integrating continuous testing into the development cycle. This makes testing more efficient, reduces costs, and enhances software quality.

What happens without an automated testing strategy in place?

During the automation adoption phase, challenges are common in different testing stages, so having a strategy in place is essential.

Transitioning from manual testing to automated testing without a clear strategy can cause problems for your software development and testing process.

Here are some of the consequences and challenges you might encounter without an automated testing strategy:

- Lack of test coverage: Without a plan for which tests to automate, you may not achieve the desired test coverage, potentially leaving critical test scenarios untested or under-automated.

- Integration challenges: Integrating automated tests into your CI/CD pipeline or development process can be challenging without a strategy, leading to delays in providing developer feedback.

- High maintenance burden: Without a strategy, you may end up with a disorganized and unmanageable suite of automated tests. This results in a high test maintenance overhead, making it challenging to keep the automated tests up to date as the application evolves.

- Demotivated team: Frustration can set in among the QA and development teams if the automation process is disorganized, leading to demotivated team members and resistance to automation efforts.

- Unclear business value: It becomes challenging to measure the ROI of test automation when you don't have a strategy in place, making it unclear whether automation is providing value. No organization supports something that doesn’t deliver business value.

- Increased risk of abandonment: Without a well-defined strategy, your organization is more likely to abandon the automation effort due to the difficulties and lack of visible progress.

The benefits of a test automation strategy

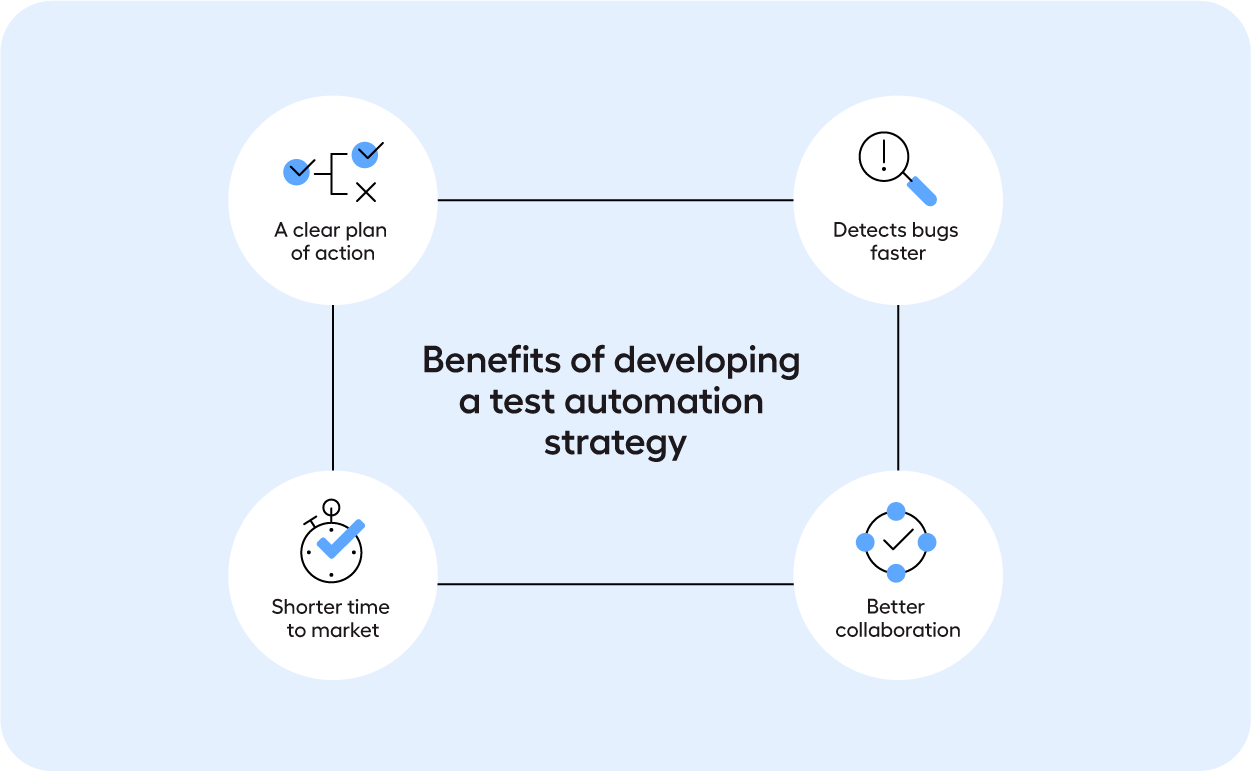

A well-crafted test automation strategy is a cornerstone of effective software testing. This strategic perspective not only streamlines the testing process but also fosters a culture of efficiency, quality, and adaptability in today's fast-paced software development landscape.

A clear plan of action

The main benefit of developing a test automation strategy is that it provides a clear plan of action for implementing automation in a structured and effective way.

This structured approach leads to several key advantages, including faster detection of bugs, improved collaboration, and shorter time to market.

Faster detection of bugs

A well-defined test automation strategy ensures that testing processes are streamlined and automated. Automated tests can be executed more quickly than manual tests, allowing for the rapid identification of software bugs and issues.

Automation enables testing to occur continuously throughout the software development lifecycle, from the early stages of development to post-release. This early and consistent testing helps catch bugs at their inception, reducing the cost and effort required to fix them.

Better collaboration

An automation strategy establishes clear objectives and roles for team members involved in testing. It defines responsibilities, processes, and communication channels. This clarity fosters better collaboration among developers, testers, and other stakeholders.

Automated tests provide consistent and repeatable results, reducing the ambiguity and disputes that can arise from human interpretation. Team members can then place more trust in the test results and focus on resolving identified issues.

A test automation strategy defines a process for communicating goals and plans to stakeholders. This sparks important discussions around new proofs-of-concept or technologies to implement within your organization.

Shorter time to market

Automated tests offer rapid feedback on the quality of the software, enabling timely adjustments and corrections. This leads to a more efficient development process with shorter feedback loops.

With automated testing, you can perform regression testing swiftly, ensuring that new features or changes don't introduce new defects. This accelerates the release cycle and allows for more frequent product releases.

Shortening the time to market is crucial in today's fast-paced software development landscape. Organizations that can release high-quality software faster gain a competitive edge and meet customer expectations more effectively.

How to build a test automation strategy

We have compiled a list of areas to focus on when building your test automation strategy. Focusing on each of these areas will allow you to build a comprehensive strategy that includes key phases in the automation test life cycle and essential considerations for successful implementation.

1. Testing tools

Selecting the right tool can make or break your test automation project. For this reason, it’s the first step in this list.

Every organization is unique, with varying people, processes, and technologies. Each QA team faces different challenges while working with various applications in diverse environments. Therefore, aligning tool requirements with these setups is crucial.

Consider costs, application support, and usability when determining the right tool. Shortlist tools that meet your specific requirements.

2. Scope

Defining a project’s scope from an automation perspective involves setting timelines and milestones for each project sprint.

In this stage, you clearly define which tests to automate and which to keep doing manually. Not all test cases can or should be automated.

Utilize the 80/20 rule (this is explained in our test strategy checklist) to prioritize tests, concentrating on repetitive and mission-critical scenarios.
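One way to make this prioritization concrete is to score each candidate test and automate only the top share first. The sketch below is an illustration rather than a prescribed method: the `Candidate` fields, the doubling weight for mission-critical scenarios, and the 20% cut-off are all assumptions you would replace with your own criteria.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    runs_per_month: int      # how often the test is executed manually
    business_critical: bool  # does a failure block a core user journey?
    manual_minutes: float    # duration of one manual run


def automation_score(candidate: Candidate) -> float:
    """Manual minutes spent per month, doubled for mission-critical scenarios."""
    effort = candidate.runs_per_month * candidate.manual_minutes
    return effort * (2.0 if candidate.business_critical else 1.0)


def top_candidates(candidates: list[Candidate], share: float = 0.2) -> list[Candidate]:
    """Return the highest-scoring share of candidates (always at least one)."""
    ranked = sorted(candidates, key=automation_score, reverse=True)
    keep = max(1, round(len(ranked) * share))
    return ranked[:keep]


# Hypothetical backlog of manual tests.
backlog = [
    Candidate("login", 40, True, 5),
    Candidate("checkout", 30, True, 12),
    Candidate("profile_avatar_upload", 2, False, 8),
    Candidate("export_report", 10, False, 15),
    Candidate("password_reset", 8, True, 6),
]
selected = top_candidates(backlog)
print([c.name for c in selected])  # ['checkout']
```

Tests that score low, such as a rarely run avatar upload check, stay manual until the high-value scenarios are covered.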

3. Test automation approach

When choosing a test automation approach, consider three areas: processes, technology, and roles.

Define structured processes for developing automated test cases, maintaining them, and analyzing their results. Select appropriate tools and technologies that align with the applications being automated.

Define the roles and responsibilities of team members, designating roles like Automation Lead, Automation Engineer, and Automation Architect.

4. Objectives

To measure the success of test automation, you should set clear objectives that align with your business goals. These objectives should be ambitious but realistic.

Common objectives in test automation are:

- Reducing execution time

- Increasing throughput

- Improving test coverage

- Reducing bugs that reach production

- Improving customer satisfaction

- Increasing ROI

5. Risk analysis

Risk analysis is an essential aspect of project planning, particularly within automation.

This analysis involves creating a list of identifiable risks with the following details:

- Description and relevant metadata

- Severity: What will happen if the risk becomes a reality? How hard will it hit the project?

- Probability: What is the likelihood of this happening?

- Mitigation: What can be done to minimize the risk?

- Cost estimate: What is the cost of mitigating the risk, and what is the cost of not doing it?
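The risk list above maps naturally onto a small risk register. The following is a minimal sketch under stated assumptions: the 1 to 5 severity scale, the `exposure` formula (severity times probability), and the rule comparing mitigation cost against expected loss are common conventions, not something this article prescribes, and the example risks and figures are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    description: str
    severity: int           # 1 (minor) to 5 (project-threatening); assumed scale
    probability: float      # likelihood between 0.0 and 1.0
    mitigation: str
    mitigation_cost: float  # cost of acting on the mitigation
    impact_cost: float      # cost if the risk materializes unmitigated

    @property
    def exposure(self) -> float:
        """Simple ranking score: severity weighted by likelihood."""
        return self.severity * self.probability

    @property
    def worth_mitigating(self) -> bool:
        """True when mitigation costs less than the expected loss."""
        return self.mitigation_cost < self.probability * self.impact_cost


# Hypothetical register entries.
register = [
    Risk("Key automation engineer leaves", 5, 0.2,
         "Cross-train a second engineer", 8000, 30000),
    Risk("Test environment unavailable during sprint", 4, 0.5,
         "Provision a standby environment", 2000, 10000),
    Risk("Tool license price increase", 2, 0.3,
         "Negotiate a multi-year contract", 500, 4000),
]

# Review the register with the highest exposure first.
for risk in sorted(register, key=lambda r: r.exposure, reverse=True):
    print(f"{risk.description}: mitigate={risk.worth_mitigating}")
```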

6. Test automation environment

A stable and predictable test environment is essential for generating reliable automation results. This environment should closely replicate production environments to reflect what end users will experience.

Consider the following aspects related to test environments:

- Where will you store the test data?

- Can a copy of production data be used? This is necessary in some industries.

- Can production data be masked?

- Should test cases clean up data after use?
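Two of these questions, masking and cleanup, can be sketched in a few lines. The example below assumes a simple in-memory `dict` as the data store and uses salted hashing to pseudonymize email addresses. The function names, the salt, and the `example.test` domain are hypothetical, and whether hashing alone satisfies your industry's data protection rules is something to confirm with your compliance team.

```python
import hashlib
from contextlib import contextmanager


def mask_email(email: str, salt: str = "test-env") -> str:
    """Replace a real address with a stable pseudonym that cannot receive mail."""
    digest = hashlib.sha256((salt + email.lower()).encode("utf-8")).hexdigest()[:12]
    return f"user_{digest}@example.test"


@contextmanager
def temporary_records(store: dict, records: dict):
    """Insert records for the duration of a test, then remove them again."""
    store.update(records)
    try:
        yield store
    finally:
        # Cleanup runs even if the test body raises.
        for key in records:
            store.pop(key, None)


store: dict = {}
masked = mask_email("Jane.Doe@bank.com")
with temporary_records(store, {"cust-1": {"email": masked}}) as data:
    assert "cust-1" in data  # the record is available inside the test
print(store)  # {}
```

Because the mask is deterministic, the same production address always maps to the same pseudonym, which keeps relationships between records intact across test runs.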

7. Execution plan

An execution plan should outline the day-to-day tasks and procedures related to automation.

Select test cases for automation based on the criteria defined in step 2 (Scope). Before any automated test cases are added to your regression suite, they should be run and verified multiple times to ensure they behave as expected. False failures are time-consuming to investigate, so test cases must be robust and reliable.
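The "run and verify multiple times" step can be automated as a simple promotion check. This is a minimal sketch, assuming each test is a plain callable that raises on failure; the `is_stable` helper and the default of ten runs are illustrative choices, not a standard.

```python
from typing import Callable


def is_stable(test: Callable[[], None], runs: int = 10) -> bool:
    """Return True only if the test passes on every one of `runs` attempts."""
    for _ in range(runs):
        try:
            test()
        except Exception:
            # Any failure, including a failed assertion, disqualifies the test.
            return False
    return True


def passing_test() -> None:
    assert 1 + 1 == 2


calls = {"count": 0}


def flaky_test() -> None:
    # Simulates an intermittent failure: every third call fails.
    calls["count"] += 1
    assert calls["count"] % 3 != 0


passing_result = is_stable(passing_test)
flaky_result = is_stable(flaky_test)
print(passing_result, flaky_result)  # True False
```

A test that fails this check goes back for repair instead of into the regression suite, where it would generate false failures.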

8. Test naming convention

A consistent test naming convention is a simple yet impactful way to create an effective testing framework.

Here’s what a test naming convention should contain:

- Test case no/ID

- Feature/Module

- A brief description of what the test case verifies
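A convention is easier to keep when it is checked automatically. The sketch below assumes one possible format, `TC<4-digit ID>_<Feature>_<snake_case_description>`. The pattern itself is an example of the three elements above, not a required layout.

```python
import re
from typing import Optional

# Assumed format: TC0042_Checkout_applies_discount_code
NAME_PATTERN = re.compile(
    r"^TC(?P<id>\d{4})"
    r"_(?P<feature>[A-Z][A-Za-z0-9]*)"
    r"_(?P<description>[a-z0-9]+(?:_[a-z0-9]+)*)$"
)


def parse_test_name(name: str) -> Optional[dict]:
    """Split a conforming test name into its parts, or return None."""
    match = NAME_PATTERN.match(name)
    return match.groupdict() if match else None


print(parse_test_name("TC0042_Checkout_applies_discount_code"))
print(parse_test_name("checkout test 1"))  # None
```

A check like this could run in code review or in the pipeline so that nonconforming names are caught before they reach the regression suite.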

9. Release control

In a release pipeline, regardless of its complexity and maturity, there is a point when a team needs to decide whether to release a build or not.

Parts of this decision-making can be automated, while others require a human touch, so the final release decision will often be based on a combination of computed results and manual inspection.

Establish clear criteria for passing, such as ensuring that all automated regression tests pass and evaluating application test logs. Whatever the criteria look like in your business, they need to be clearly defined.
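Writing the criteria as code is one way to make them unambiguous. The sketch below combines computed results with a manual sign-off, as described above. The `BuildReport` fields and the zero-error threshold are assumptions for illustration, and your own gate would encode whatever criteria your business agrees on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BuildReport:
    regression_total: int
    regression_passed: int
    error_log_entries: int  # errors found in application test logs
    manual_signoff: bool    # result of the human inspection


def release_decision(report: BuildReport, max_log_errors: int = 0) -> tuple[bool, list[str]]:
    """Return (approved, reasons); every blocking reason is listed."""
    reasons: list[str] = []
    if report.regression_total == 0:
        reasons.append("no regression tests were run")
    elif report.regression_passed < report.regression_total:
        failed = report.regression_total - report.regression_passed
        reasons.append(f"{failed} of {report.regression_total} regression tests failed")
    if report.error_log_entries > max_log_errors:
        reasons.append(f"{report.error_log_entries} errors in application test logs")
    if not report.manual_signoff:
        reasons.append("manual inspection not signed off")
    return (not reasons, reasons)


approved, reasons = release_decision(BuildReport(250, 248, 0, True))
print(approved, reasons)  # False ['2 of 250 regression tests failed']
```

Returning the reasons alongside the decision gives the team a starting point for failure analysis when a build is held back.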

10. Failure analysis

Having a plan for analyzing failing test cases and taking necessary actions is a crucial, yet sometimes overlooked, part of a test automation strategy.

The time from when a tester is notified of a failing test case until the fix is described, understood, and accepted in the development backlog is usually much longer than teams anticipate. Having a well-defined process for this can save a development team a lot of time and frustration.

11. Review and feedback

Finally, once you’ve made a draft of a test automation strategy, make sure to have it reviewed and approved by relevant stakeholders in the development team.

Foster a culture of continuous learning and improvement by embracing feedback from stakeholders, peers, and team members. Lessons learned during software automation should be captured and documented for future reference. Continuously enhance your test automation strategy based on these insights.

For a more in-depth strategic document, download our Ultimate Strategy Checklist for Test Automation in 2024. This guide delves deep into critical aspects such as testing tools, automation approach, risk analysis, and execution planning. It also has examples and worksheets so you can create an actionable plan with your team for improving QA through test automation in 2024.

Test automation strategy example

Investec, a specialist bank and wealth manager, faced challenges in its complex IT landscape, including legacy systems and web-based technologies, which made test coverage resource-intensive and time-consuming.

Manual testing was taking 3-4 weeks for every feature release, hindering business agility. By implementing test automation through a comprehensive test automation strategy, Investec automated tests for various technologies, including the core mortgages platform and web-based applications.

Integration of Leapwork's test automation platform into their CI/CD pipeline allowed for faster and 24/7 test execution, enhancing productivity and reducing risk. With the regression suite fully automated, Investec experienced a 3-4 times faster time to market.

Key achievements:

- 95% automated regression tests

- Test execution that is 20% quicker and runs 24/7

- 3-4 times faster time to market

Conclusion

Adopting a test automation strategy is about more than just new tools or processes; it's about embracing a mindset that prioritizes efficiency, quality, and teamwork.

Understanding the what, why, and how of test automation strategies enables organizations to optimize their QA efforts in line with their broader software development objectives.

As shown by Investec’s success, a well-devised test automation strategy can lead to quicker releases, better software quality, and ultimately, higher customer satisfaction.