Drive ROI Across Your Stack

Get more out of every system, every workflow, and every team.

Faster Releases

Productivity Increase

Fewer Defects

Faster Time to Value

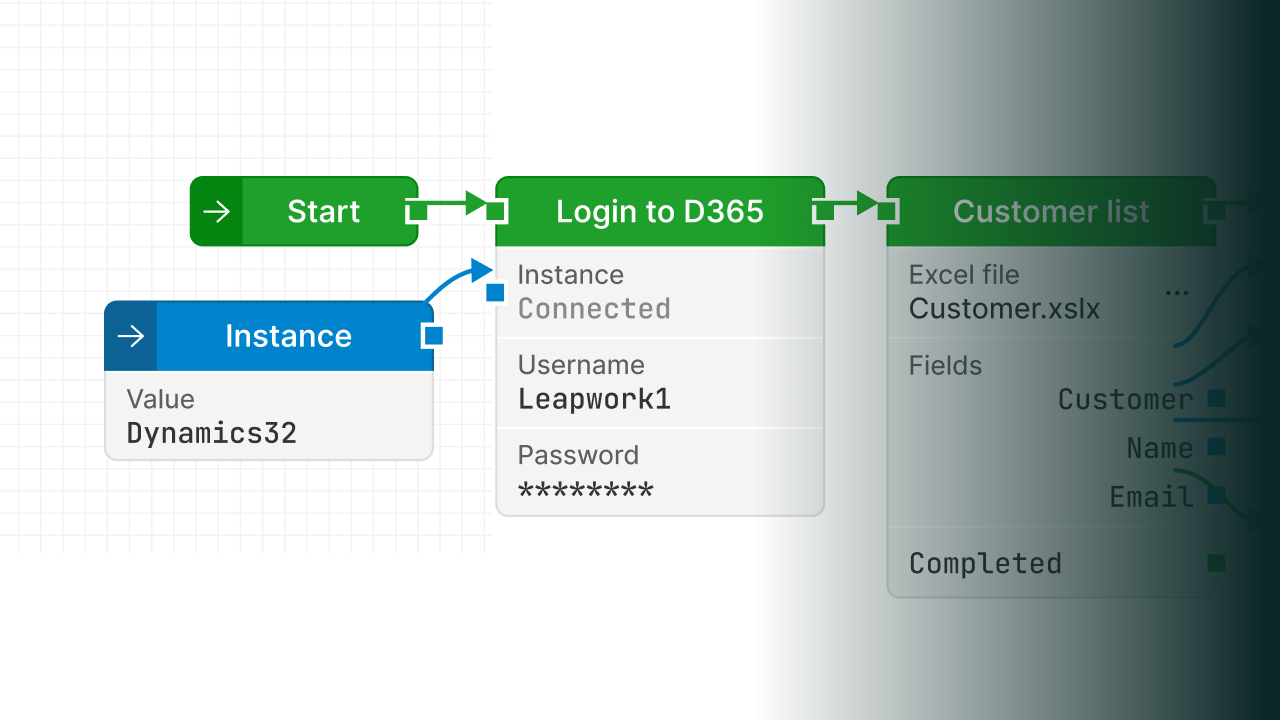

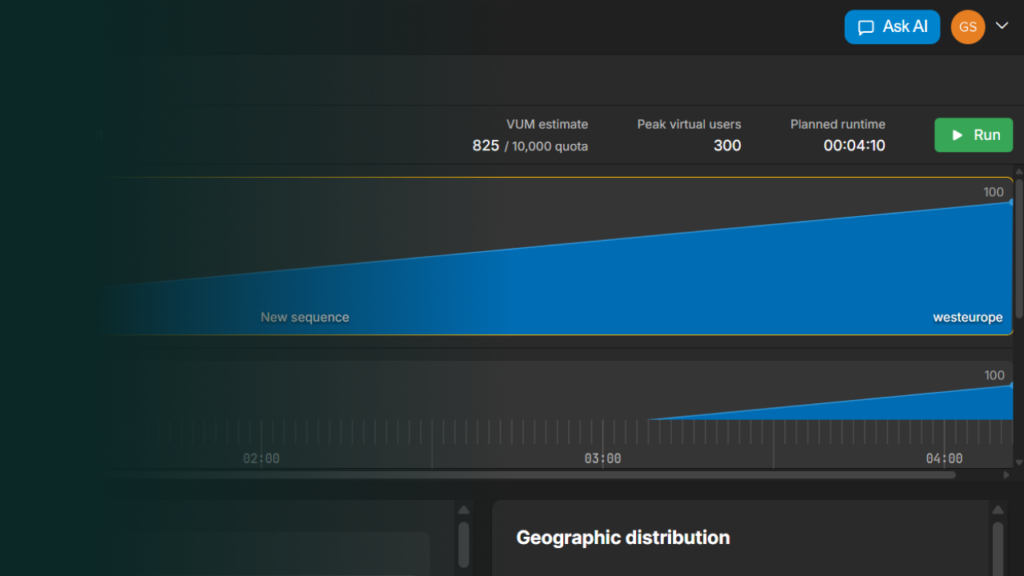

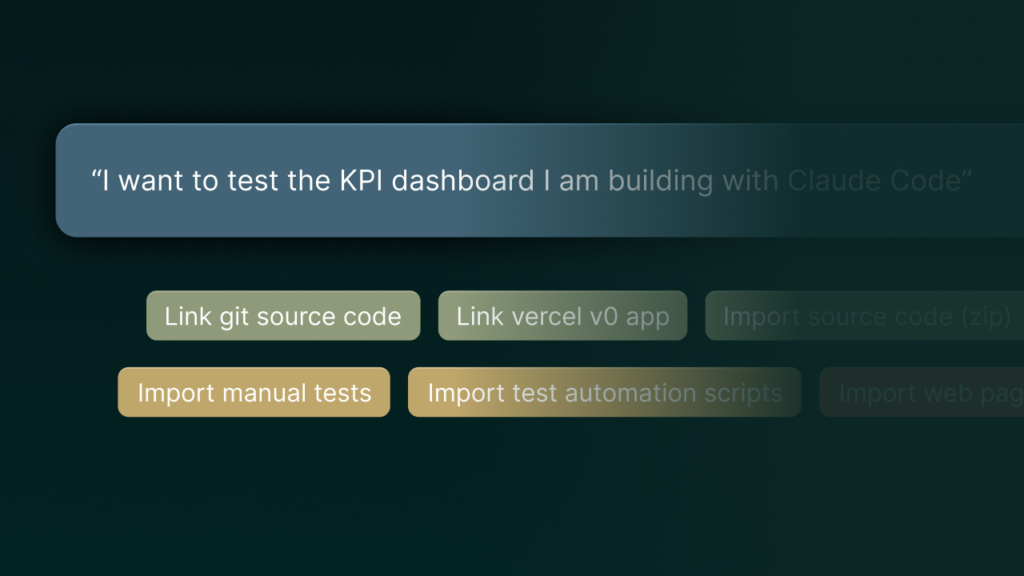

Continuous Validation Platform

Agentic, Application Agnostic, and Deterministic by Design

Validation Across Industries

Financial Services

Release faster without increasing risk across payments, trading, and reporting systems. Leapwork gives teams the confidence to move quickly while staying ahead of impact across connected systems.

Healthcare + Pharmaceutical

Bring products and updates to market faster while maintaining full compliance and traceability. Leapwork enables speed without compromising audit readiness or patient-critical systems.

Retail + eCommerce

Keep every release live through traffic spikes, promotions, and constant change. Leapwork ensures the full customer journey stays uninterrupted across storefront, inventory, and fulfillment.

Manufacturing

Move faster across planning, production, and supply chain systems without disrupting operations. Leapwork keeps everything aligned as changes progress.

Logistics + Transportation

Keep operations moving in real time as routes, schedules, and conditions shift. Leapwork maintains system coordination, so everything stays on track.

Software + SaaS

Ship continuously without introducing instability across services and integrations. Leapwork keeps systems aligned as they evolve, so teams can move fast.

Enterprise Customer Stories

For over a decade, Leapwork has earned the trust of the world’s most complex enterprises across every industry.

Built for Mission-Critical Enterprise Applications

Leapwork connects to the systems that run your business, so validation covers all of them.

Our Partners

We work with leading partners, IT consultants, technology vendors, and training experts across the globe to drive shared success.