Stop testing your patience

Eliminate Bottlenecks. Accelerate ROI.

Speed

Ship faster without breaking what runs the business

Quality

Every system works when it matters most

Productivity

Turn AI speed into real output across teams

Governance

Traceable, audit-ready results

Confidence

Know every release works before it hits production

Coverage

From legacy to AI-native and everything in between

Resilience

Prove performance under real-world pressure

Scalability

Validation that keeps up with AI-driven delivery

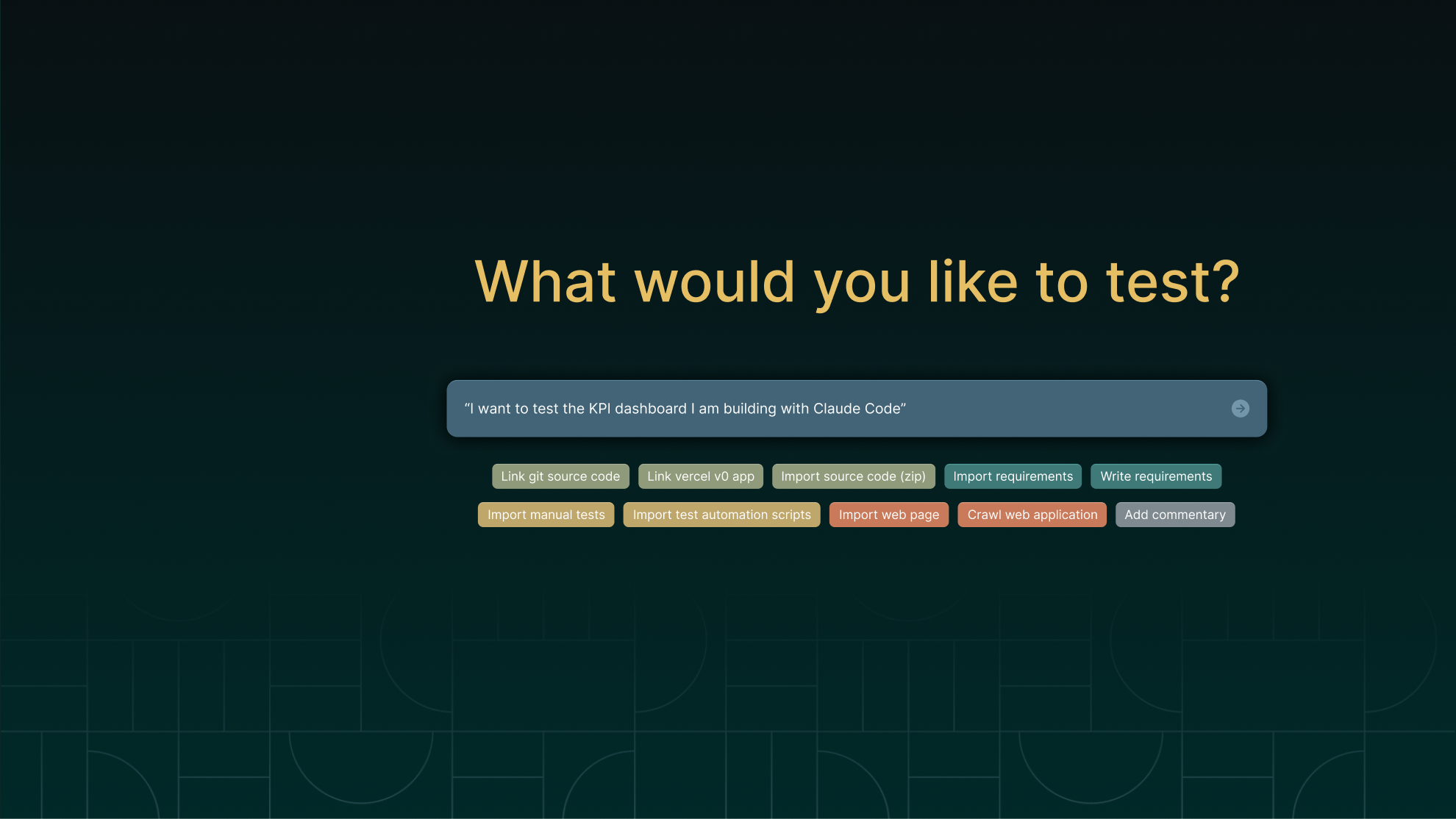

AI Your Way™

We have earned the trust of the world’s most complex enterprises for over a decade, so we understand what it takes to validate systems that work together and how AI fits into that reality. We built agentic capabilities into the platform, and our AI Your Way™ approach lets teams layer in additional controls and leverage existing AI frameworks. You get the speed of agentic AI, with the flexibility to make it work across systems that range from AI-native to legacy-constrained and everything in between.

Application Agnostic

Validate web, desktop, ERP, SaaS, and AI-native applications on one platform that covers your full enterprise estate.

Deterministic by Design

Proven in regulated industries, with explainable logic, audit trails, and governed results that build confidence in every release.

Sophistication with Ease

Handle the most complex enterprise environments with a simplicity that helps teams deliver quality faster.